Ruihong Qiu

邱瑞鸿 /`ray hong chill/

I am currently an Assistant Professor and an ARC DECRA fellow (2025-2028) in the School of Electrical Engineering and Computer Science (EECS) at The University of Queensland (UQ). Here is my short bio.

My current research mainly focuses on:

- LLM post-training.

- Agentic RL.

- Diffusion LLM.

Previously, I worked on information retrieval (IR), recommender systems (RecSys), graph neural networks (GNNs), and graph foundation models (GFMs).

Recruitment

I am actively looking for 1-2 self-motivated PhD students to start in 2027, all fully funded!

- [For prospective PhD students] / [PhD recruitment (in Chinese)].

- [For master thesis, bachelor honours or Summer/Winter Research students at UQ].

- [For interns / visitors].

Recent News

- 10.2025 Our paper “Beyond Static LLM Policies: Imitation-Enhanced Reinforcement Learning for Recommendation” was selected as a Best Paper Finalist at ICDM 2025.

- 08.2024 ARC DECRA project funded: “Lifelong Paradigms for Versatile, Robust and Agile Recommender Systems” (2025-2028).

- 06.2024 Our team gave a talk, “Effective Representation Learning for Legal Case Retrieval”, at the IR Seminar, University of Glasgow. [slides]

- 05.2024 Our team gave a talk, “Effective Representation Learning for Legal Case Retrieval”, at THUIR, Tsinghua University. [slides]

- 03.2024 Gave a talk, “Graph Learning Methods in Session-based Recommendations and Legal Case Retrieval”, at the IRonGraphs Workshop, ECIR 2024. [slides]

- Past news

Selected Research

The full publication list is available on my Google Scholar page.

LLM Post-training


Break the Block: Dynamic-size Reasoning Blocks for Diffusion Large Language Models via Monotonic Entropy Descent with Reinforcement Learning

Yan Jiang, Ruihong Qiu, Zi Huang. ICML 2026. arXiv / code

We identify a monotonic entropy descent in the block decoding of diffusion LLMs (dLLMs) during correct generation. We further develop an RL post-training method that achieves this descent with a dynamic-size block mechanism.


TRN-R1-Zero: Text-rich Network Reasoning via LLMs with Reinforcement Learning Only

Yilun Liu, Ruihong Qiu, Zi Huang. ACL 2026 (Main, Oral). arXiv / code

We introduce TRN-R1-Zero, an RL-based LLM post-training method for effective reasoning over text-rich networks, such as citation, hyperlink, social and co-purchase networks.


Block-R1: Rethinking the Role of Block Size in Multi-domain Reinforcement Learning for Diffusion Large Language Models

Yan Jiang, Ruihong Qiu, Zi Huang. Preprint. arXiv / code

We introduce a new dataset on block size for dLLMs and a new RL post-training framework for dLLMs.


When to Commit? Towards Variable-Size Self-Contained Blocks for Discrete Diffusion Language Models

Danny Wang, Ruihong Qiu, Zi Huang. Preprint. arXiv

We formally define future-aware and no-future criteria for self-contained blocks, and propose a novel variable-size self-contained block method for dLLMs.

Out-of-distribution on Graphs


What Information Matters? Graph Out-of-Distribution Detection via Tri-Component Information Decomposition

Danny Wang, Ruihong Qiu, Guangdong Bai, Zi Huang. ICML 2026. arXiv / code

We propose TIDE, an information decomposition framework that separates feature, structure, and joint signals in graph neural networks, using an information bottleneck objective to improve OOD detection by filtering spurious information.


GFMate: Empowering Graph Foundation Models with Pre-training-agnostic Test-time Prompt Tuning

Yan Jiang, Ruihong Qiu, Zi Huang. ICML 2026. arXiv / code

We propose GFMate, a pre-training-agnostic test-time prompt tuning framework that uses centroid and layer prompts to adapt GFMs by leveraging both labelled and unlabelled target data.


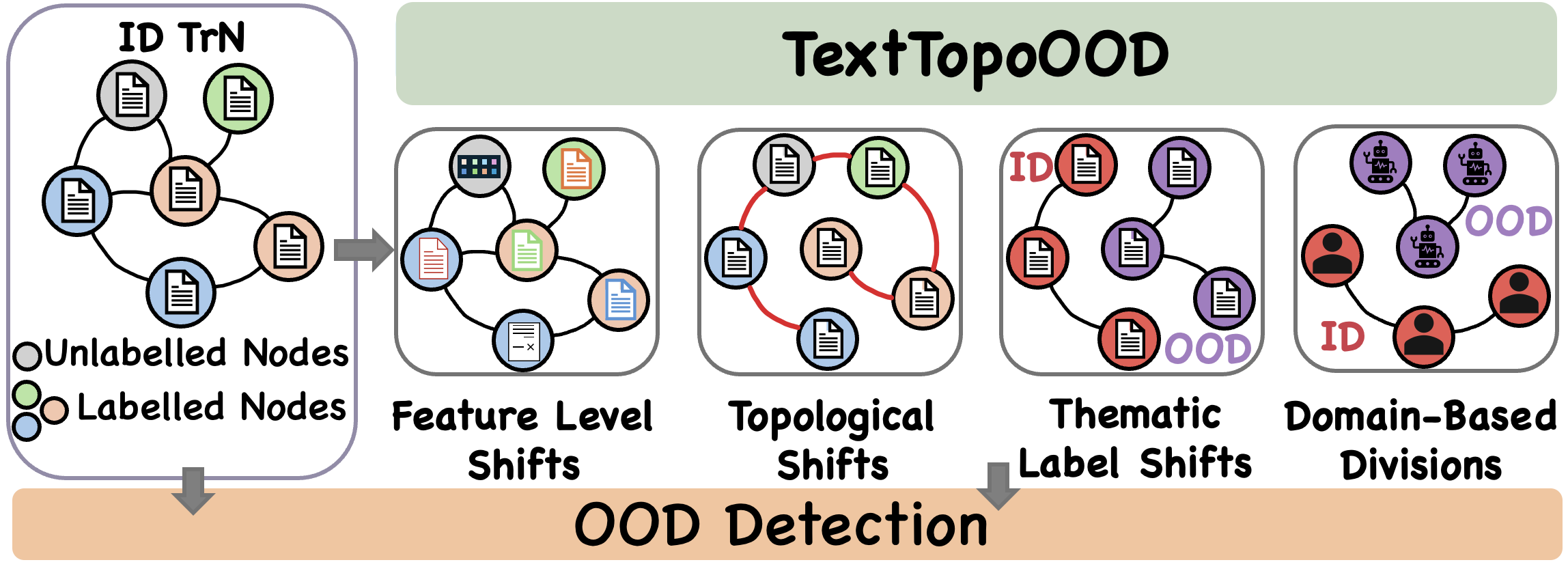

Text Meets Topology: Rethinking Out-of-distribution Detection in Text-Rich Networks

Danny Wang, Ruihong Qiu, Guangdong Bai, Zi Huang. EMNLP 2025 (Main). arXiv / code

We introduce TextTopoOOD, a framework for modelling diverse OOD scenarios on text-rich networks, and propose TNT-OOD, a novel detection method that captures the intricate interplay between text and topology.


GOLD: Graph Out-of-Distribution Detection via Implicit Adversarial Latent Generation

Danny Wang, Ruihong Qiu, Guangdong Bai, Zi Huang. ICLR 2025 (Spotlight). arXiv / OpenReview / code

We propose GOLD, a graph OOD detection framework built on an implicit adversarial learning pipeline that provides synthetic OOD exposure without relying on pre-trained models.


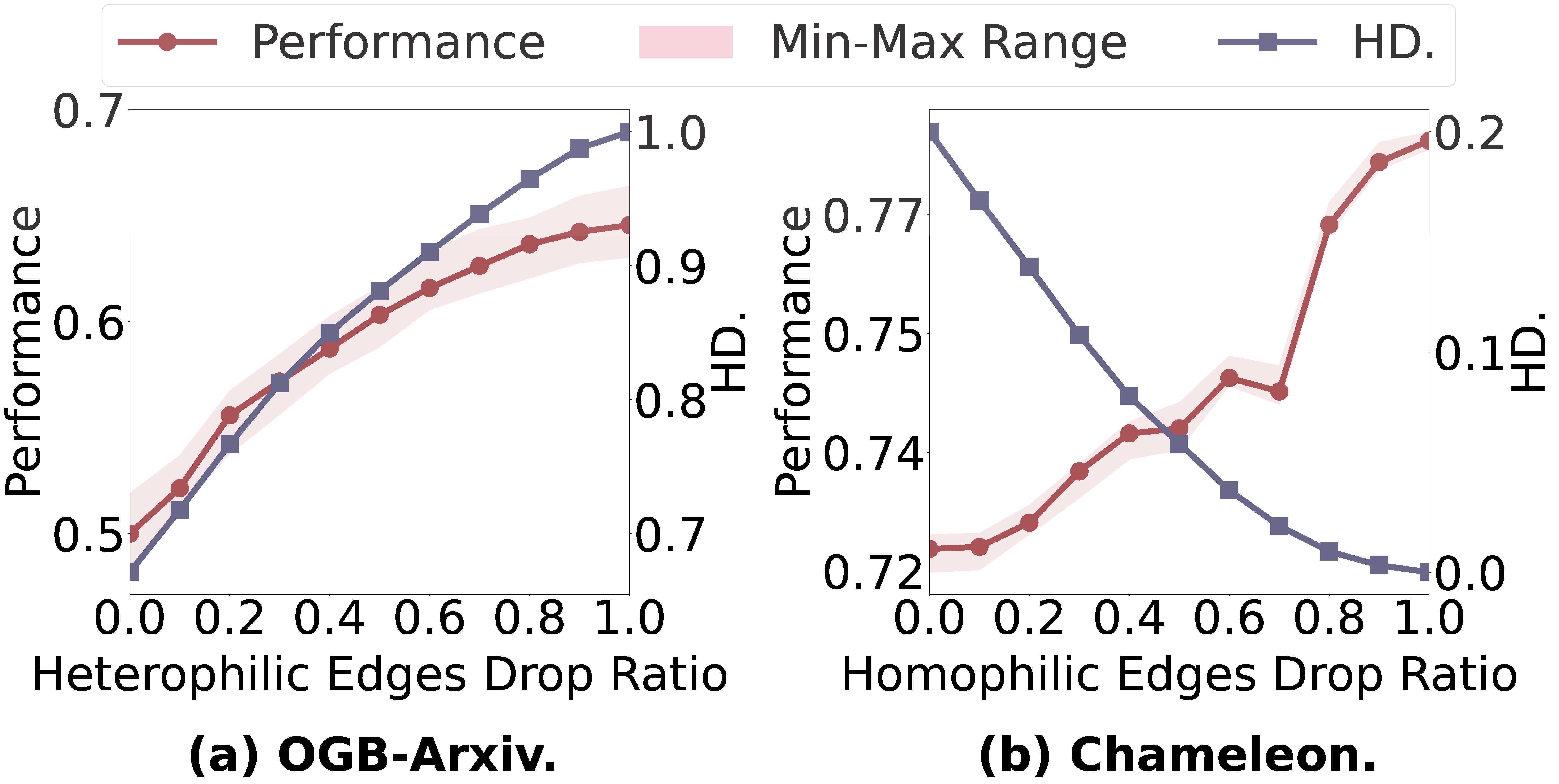

Does Homophily Help in Robust Test-time Node Classification?

Yan Jiang, Ruihong Qiu, Zi Huang. WSDM 2026 (Oral). arXiv / code

We propose GrapHoST, a framework that performs homophily-based test-time graph transformation to enhance the robustness of graph models.

Graph Condensation


GCondenser: Benchmarking Graph Condensation

Yilun Liu, Ruihong Qiu, Zi Huang. CIKM 2025. arXiv / code

We introduce a graph condensation benchmark with a thorough methodology-development framework and an extensive evaluation protocol.


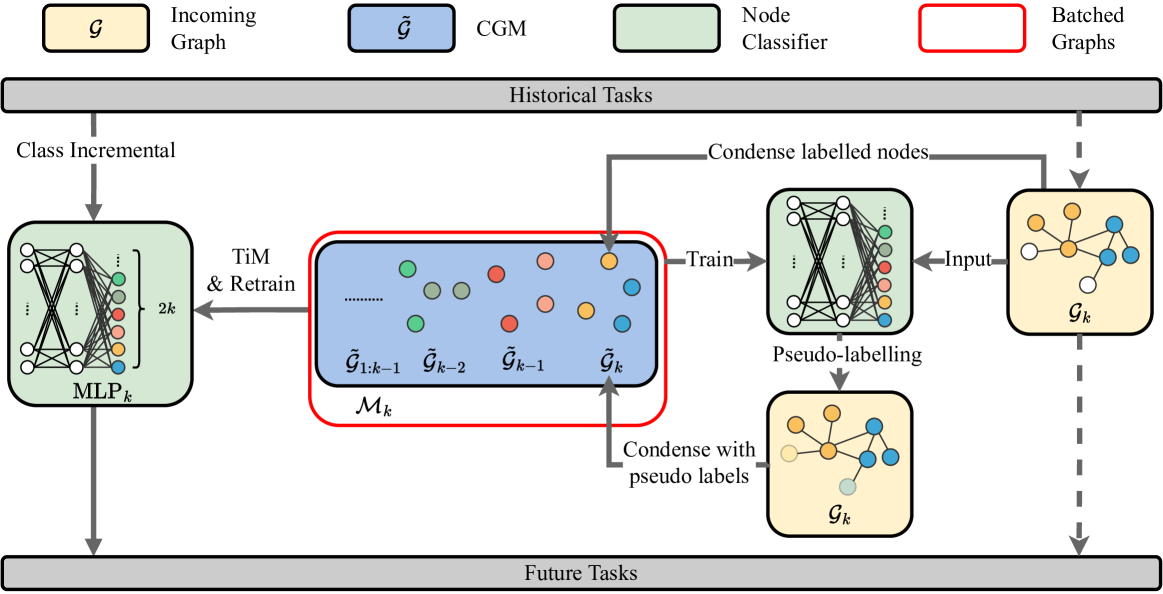

PUMA: Efficient Continual Graph Learning with Graph Condensation

Yilun Liu, Ruihong Qiu, Yanran Tang, Hongzhi Yin, Zi Huang. TKDE 2024. arXiv / code

We extend the Condense-and-Train (CaT) continual graph learning algorithm into PUMA🐆, a more efficient and effective pseudo-label guided memory bank framework.


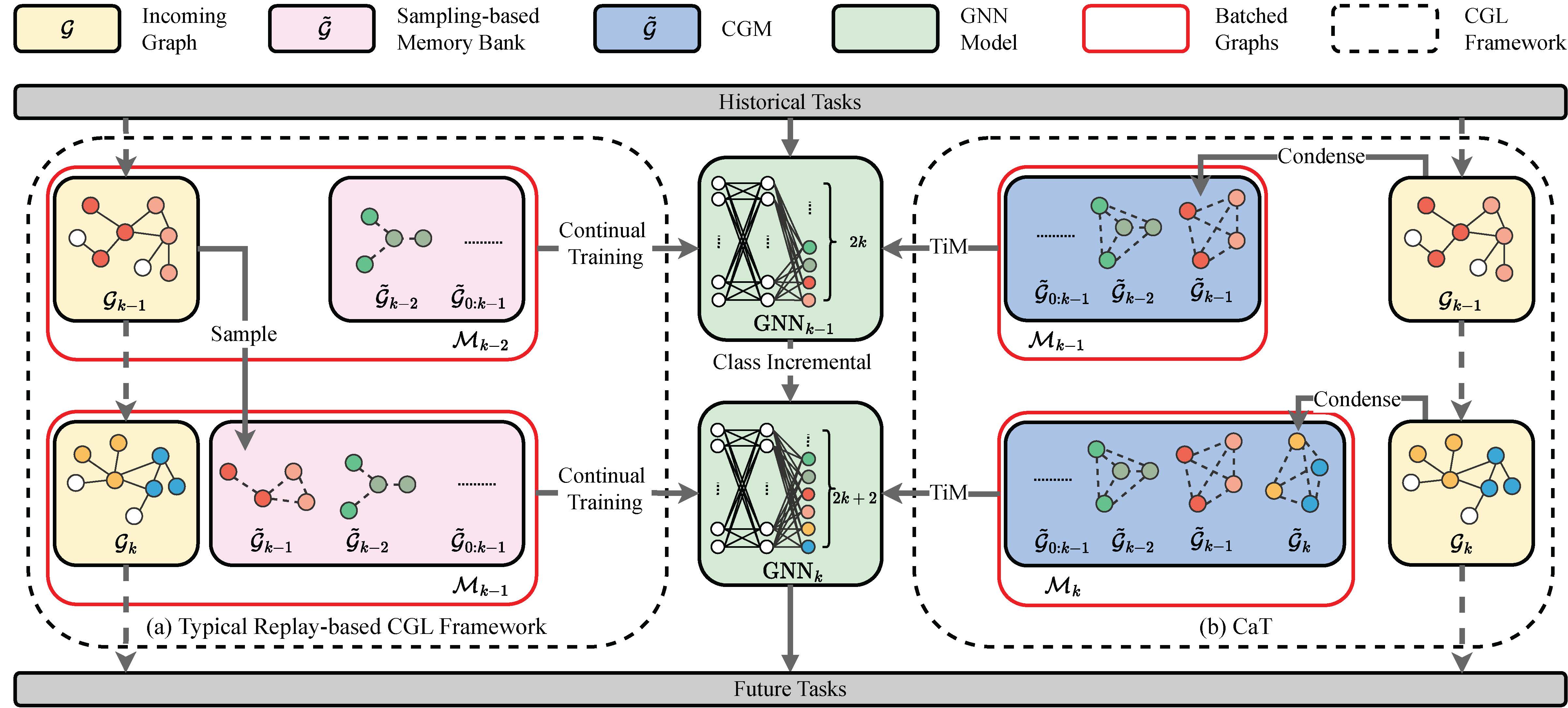

CaT: Balanced Continual Graph Learning with Graph Condensation

Yilun Liu, Ruihong Qiu, Zi Huang. ICDM 2023. arXiv / code

We introduce Condense-and-Train (CaT🐱), a memory-based continual graph learning algorithm that uses graph condensation to construct a more representative memory bank. A Train-in-Memory continual learning scheme further alleviates the imbalanced training issue in class-incremental learning.

Legal Case Retrieval


Cassette: Case-to-Case Structural Distillation for Efficient Legal Case Retrieval

Yanran Tang, Ruihong Qiu, Hongzhi Yin, Xue Li, Zi Huang. TOIS 2026. arXiv / code

We develop a distillation strategy that accelerates case-to-case graph-based methods (such as our CaseLink, below) for legal case retrieval.


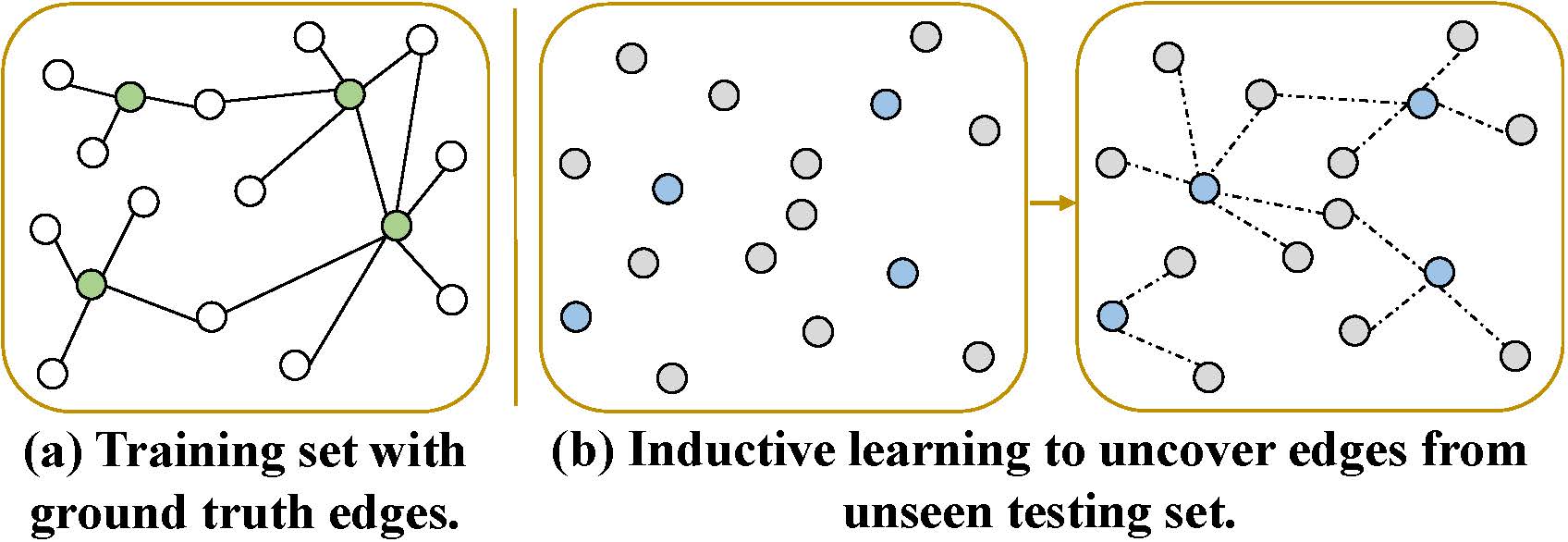

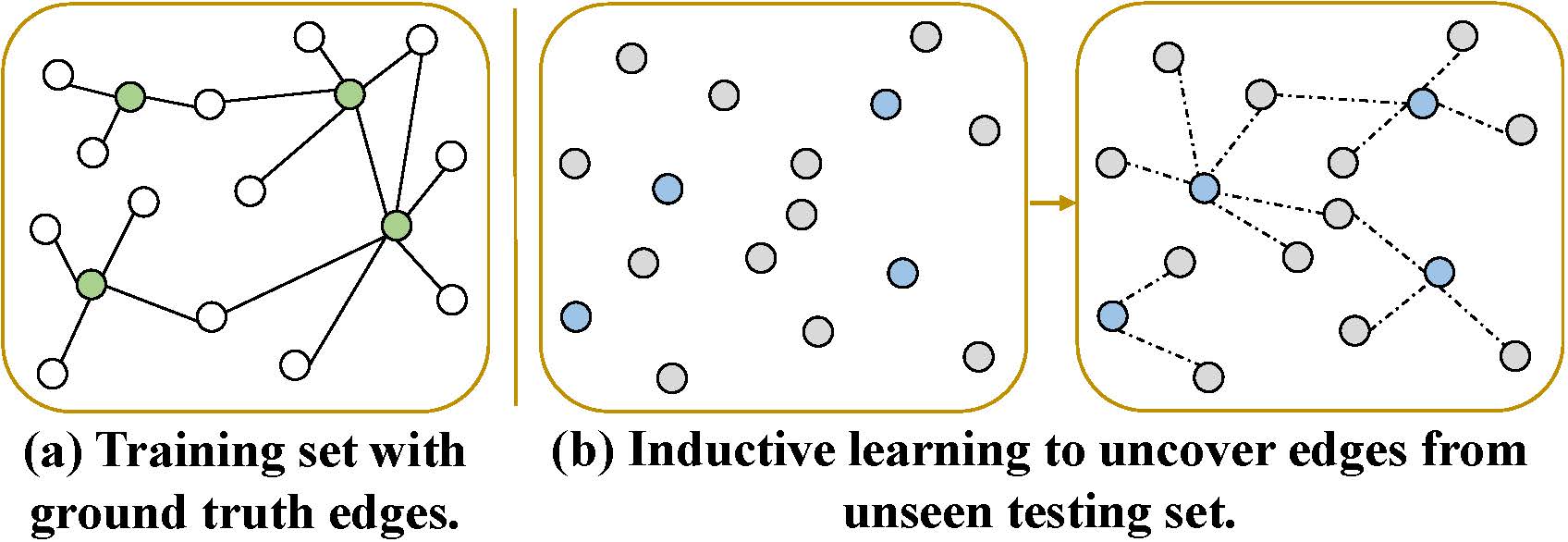

CaseLink: Inductive Graph Learning for Legal Case Retrieval

Yanran Tang, Ruihong Qiu, Hongzhi Yin, Xue Li, Zi Huang. SIGIR 2024. arXiv / code

We introduce an inductive graph learning paradigm for legal case retrieval that tackles the challenge of query and candidate cases unseen during training.


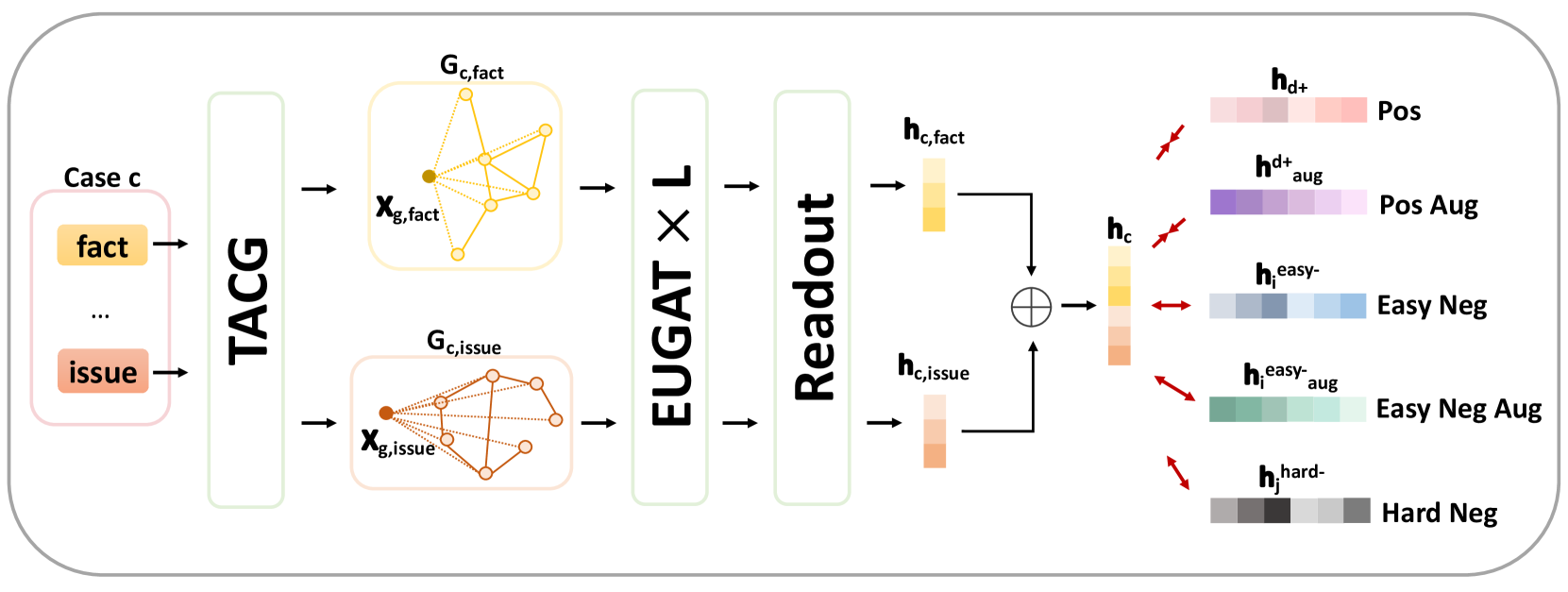

CaseGNN++: Graph Contrastive Learning for Legal Case Retrieval with Graph Augmentation

Yanran Tang, Ruihong Qiu, Yilun Liu, Xue Li, Zi Huang. TOIS 2024 (under review). arXiv / code

We introduce CaseGNN++, which extends the CaseGNN framework with graph augmentations.


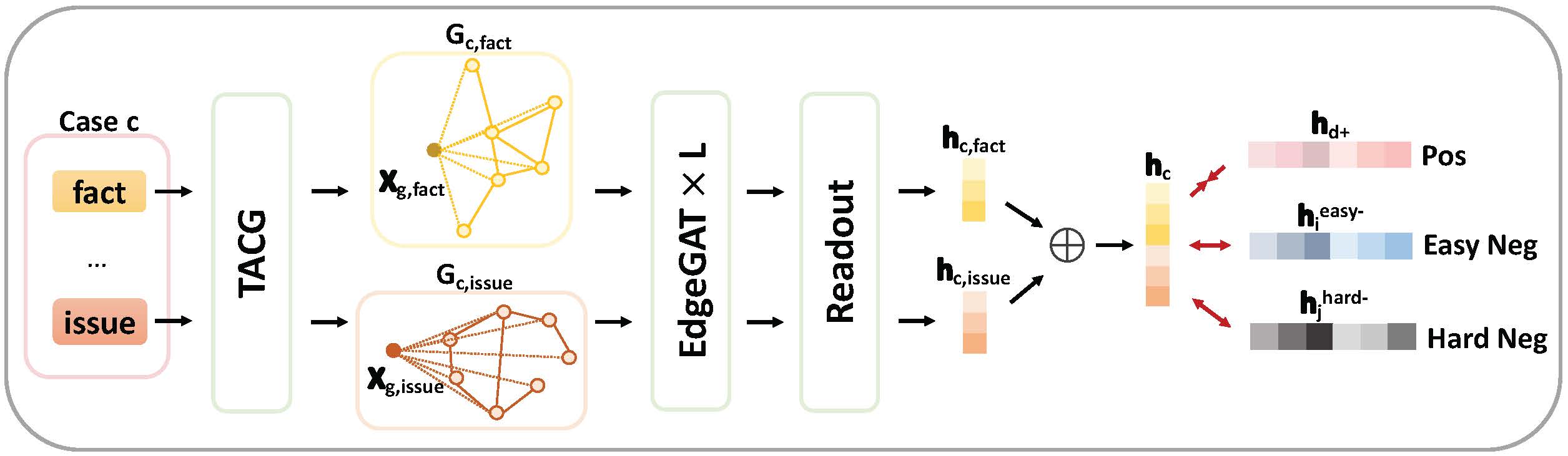

CaseGNN: Graph Neural Networks for Legal Case Retrieval with Text-Attributed Graphs

Yanran Tang, Ruihong Qiu, Yilun Liu, Xue Li, Zi Huang. ECIR 2024. arXiv / code

We introduce a structural modelling of legal cases for effective retrieval, aided by LLM-based summarisation and graph neural networks.

Team

- Xiaoguang Qiao, UQ EECS PhD (1.2026-, co-advised with Helen Huang and Yujun Cai)

- Xiaoqian Hu, UQ EECS PhD (10.2025-, co-advised with Sen Wang)

- Chenke Xu, UQ EECS PhD (7.2025-, co-advised with Xue Li)

- Boyu Luo, UQ EECS PhD (7.2024-, co-advised with Helen Huang and Guangdong Bai)

- Yan Jiang, UQ EECS PhD (1.2024-, co-advised with Helen Huang and Guangdong Bai)

- Danny Wang, UQ EECS PhD (1.2024-, co-advised with Helen Huang and Guangdong Bai)

- Hrishikesh Patel, UQ EECS PhD (4.2023-, co-advised with Sen Wang)

- Yilun Liu, UQ EECS PhD (1.2023-, co-advised with Helen Huang)

Alumni

- Yi Zhang, UQ EECS PhD -> SMU Postdoc (7.2024-12.2025, co-advised with Sen Wang and Jiajun Liu)

- Jingyu Ge, UQ ACWEB PhD -> UQ ACWEB Postdoc (1.2022-12.2025, co-advised with Zhiguo Yuan, Helen Huang, and Jiuling Li)

Service

- Conference organisation: Area Chair at NLPCC’25; PhD Symposium Co-Chair at WWW’25; Program Committee Co-Chair at ADC’23; PhD Forum Co-Chair at AJCAI’23

MISC

I speak Cantonese, Mandarin and English.

Updated on 16/05/2026.